
Fire up those chemokines! Created this sequence (concept to completion) in 5 days given only an audio clip & general script guidelines.

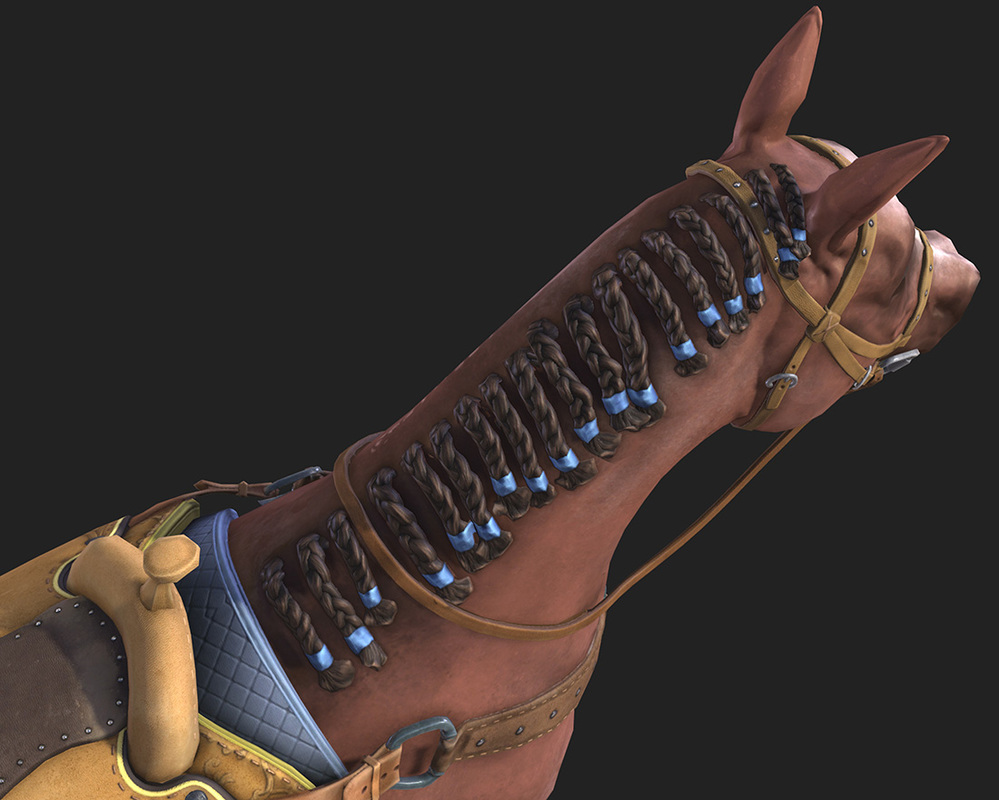

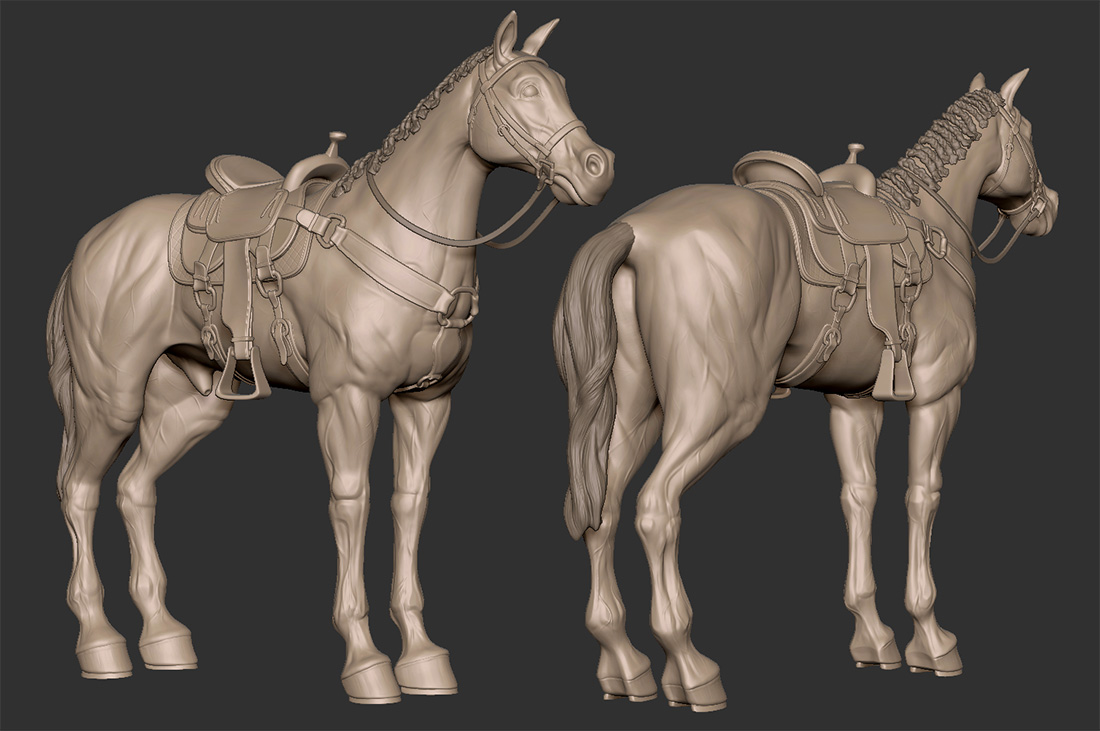

Ni No Kuni game fan art! I imagine this is how a Familiar would look in the current generation of realtime graphics.

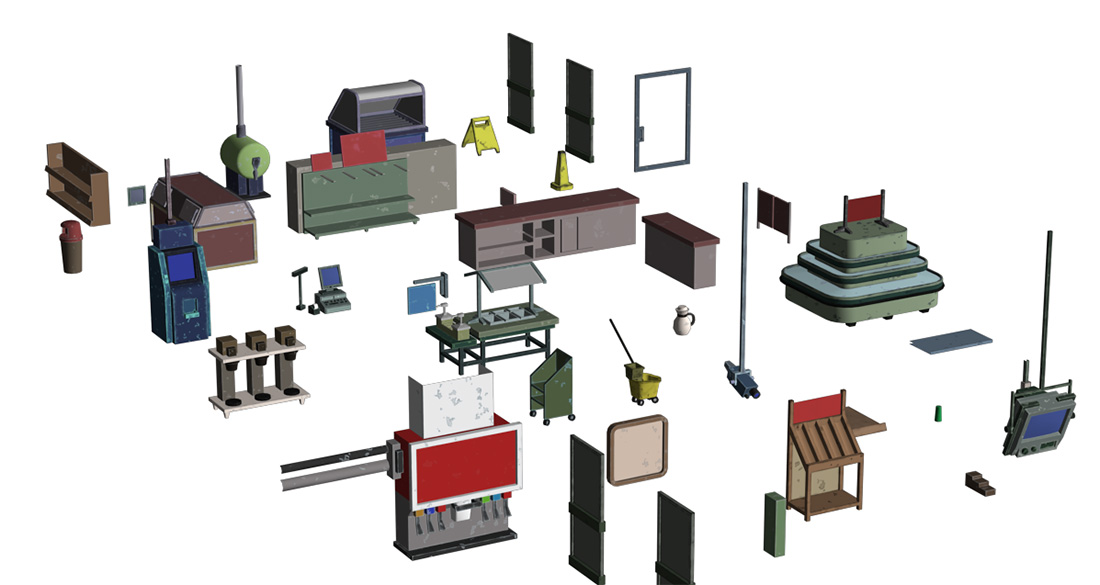

Sadly, the Corner Store was cut from Surviving Independence, so I'm posting a couple of screen captures to archive the level. There wasn't enough production time to add the unique gameplay and scenarios for this level. One video shows the completed base modeling and the other shows nearly final environment art.

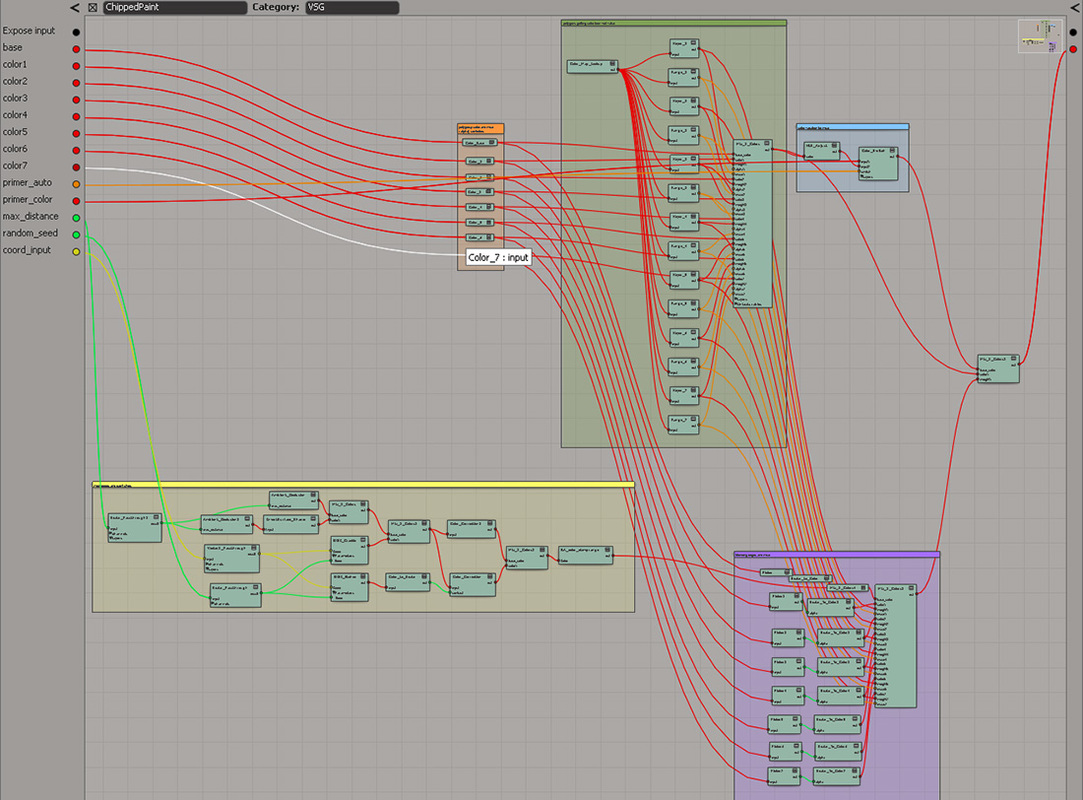

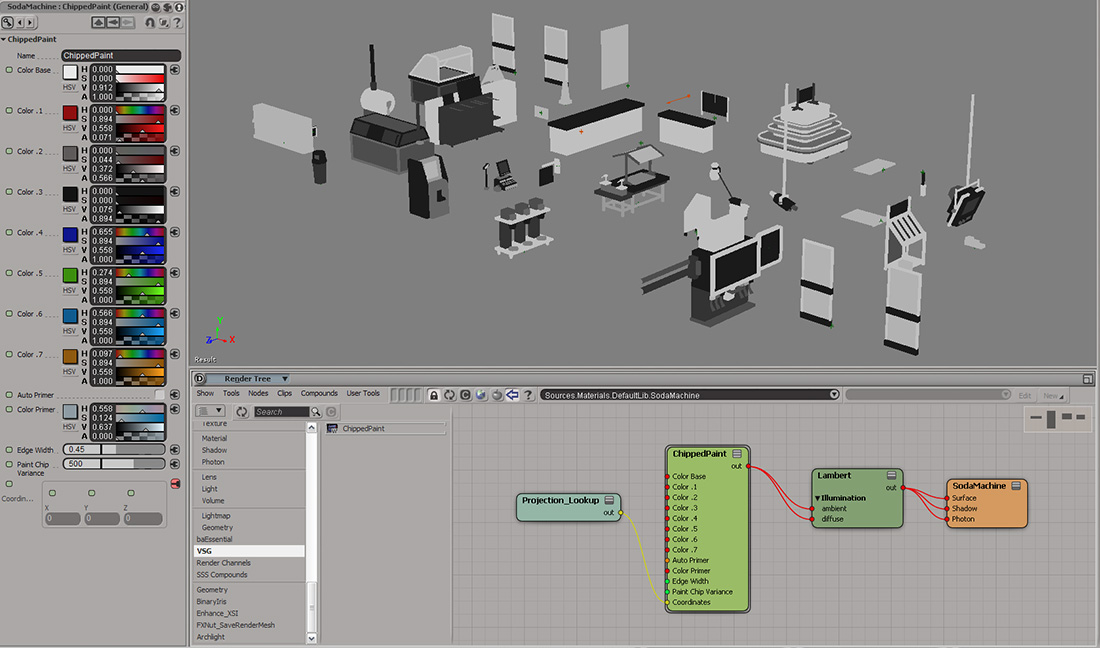

Independence has over 500 models & 1000 textures in it. As the only artist on the game, I had to develop fast production techniques. Knowing I wouldn't be able to hand-paint most of the textures, I created an uber shader network. The shader looked up vertex values that I flood-filled onto polygons while modeling. It then applied the appropriate base colors for the level theme and added some color variation, edge wear, & damage. I could then bake all the level textures with a single push of a button. This texturing method was similar to a Substance Designer workflow, but lightweight and without having to leave & return to the base 3d application. Below are screen captures showing this technique applied to props in the Corner Store. While this level was cut from the game, this method helped ship the other 37 levels!

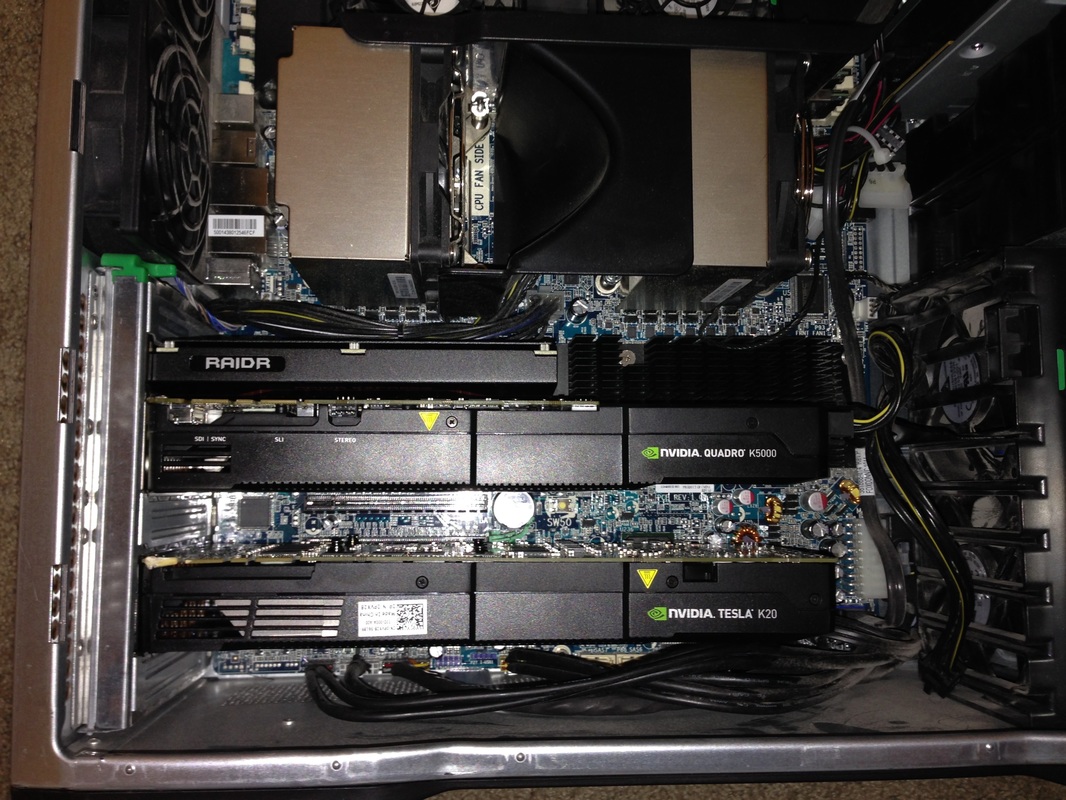
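For anyone curious how the vertex-value lookup could work outside of a shader network, here is a minimal Python sketch of the idea. It is not the actual shader from the game: the `THEMES` palette, the material IDs, the `shade` and `bake_level` functions, and the wear color are all hypothetical names and values made up for illustration.

```python
import random

# Hypothetical palette: a material ID painted onto polygons as a vertex
# value is looked up per level theme. All values here are invented.
THEMES = {
    "corner_store": {
        0: (0.80, 0.78, 0.70),  # walls
        1: (0.55, 0.10, 0.10),  # signage
        2: (0.30, 0.30, 0.32),  # metal shelving
    },
}


def clamp01(x):
    """Keep a color channel inside the 0..1 range."""
    return max(0.0, min(1.0, x))


def shade(material_id, theme, edge_mask=0.0, variation=0.05,
          wear_color=(0.6, 0.6, 0.6), rng=None):
    """Return an RGB color for one vertex.

    material_id: the value flood-filled onto the polygon while modeling.
    edge_mask:   0..1, how exposed the vertex is (1 = fully worn edge).
    variation:   maximum random brightness offset added to the base color.
    """
    rng = rng or random.Random(0)
    base = THEMES[theme][material_id]
    offset = rng.uniform(-variation, variation)
    varied = tuple(clamp01(c + offset) for c in base)
    # Blend toward the wear color where the edge mask is high.
    return tuple(clamp01(v * (1.0 - edge_mask) + w * edge_mask)
                 for v, w in zip(varied, wear_color))


def bake_level(props, theme, seed=0):
    """'One button' bake: shade every vertex of every prop in a level.

    props maps a prop name to a list of (material_id, edge_mask) pairs.
    """
    rng = random.Random(seed)
    return {
        name: [shade(mid, theme, edge, rng=rng) for mid, edge in verts]
        for name, verts in props.items()
    }
```

A call like `bake_level({"shelf": [(2, 0.0), (2, 1.0)]}, "corner_store")` would color the whole prop from the painted IDs alone, which is the part that made hand-painting unnecessary.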

Rendering has always been a black art with render engines of the past. With Mental Ray, combining deformation motion blur with ambient occlusion was a recipe for disaster. On the GPU, it's no problem at all for Redshift.

Here is a Redshift vs Mental Ray sequence test. Cheats and workarounds for computationally expensive processing like global illumination, motion blur, depth of field, and refractions/reflections are a thing of the past!

Took a chance buying a new but cosmetically damaged Tesla K20c off of eBay. It had a small ding on one corner, so I got it for pennies on the dollar. Works perfectly! Since it's a headless GPU compute card, I can switch it to TCC (Tesla Compute Cluster) mode, letting the hardware run without any overhead from MS Windows. In TCC mode my test renders were 50% faster, so it's worth the trouble to see if your headless GPU can run in TCC mode; Titans can... possibly others as well. Combined with the Quadro K5000, the NVIDIA drivers go into Maximus mode. Redshift screeeeeams!

Redshift GPU computing on the Reading Nook scene. This used to take hours to render with Mental Ray.

Autodesk is ending development and support of Softimage in 2016. The end of an era. I look forward to the new players this will bring to the DCC space.

I came up with a clever way to solve transitions to different areas of the city via subway (and bus) and thought I would share. The background tracks & subway cars scroll in a loop while the interior is static. I added a bit of shake animation to the game camera to sell the illusion. Below you can see how this transition level works in-game.
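The scrolling trick itself is simple enough to sketch in a few lines of Python. This is only an illustration of the idea, not the game's code: the function names, the speed, the loop length, and the shake values are all assumptions picked for the example.

```python
import math


def loop_offset(t, speed, loop_length):
    """Position of the scrolling background at time t (seconds).

    The tracks and passing cars move at `speed` units per second and
    wrap around every `loop_length` units, so they appear endless
    while the interior never moves.
    """
    return (t * speed) % loop_length


def camera_shake(t, amplitude=0.02, frequency=9.0):
    """Small (x, y) camera offset that sells the feeling of motion.

    Two sine waves at unrelated rates keep the shake from looking
    like an obvious repeating pattern.
    """
    x = amplitude * math.sin(2.0 * math.pi * frequency * t)
    y = 0.5 * amplitude * math.sin(2.0 * math.pi * frequency * 1.7 * t + 1.3)
    return (x, y)
```

Each frame, the background is placed at `loop_offset(t, speed, loop_length)` and the camera is nudged by `camera_shake(t)`; everything inside the car stays where it is.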


Derek Jenson Blog

My website serves to archive experiments, document projects, share techniques, and motivate further exploration & artistry in 3d space.