
I received a pile of scrap metal from a friend. I wanted to build a railing that met city code using only that scrap, which was challenging because no single remnant was long enough to meet the height requirement. I came up with this offset-45 design as a solution. Happy with how it turned out!

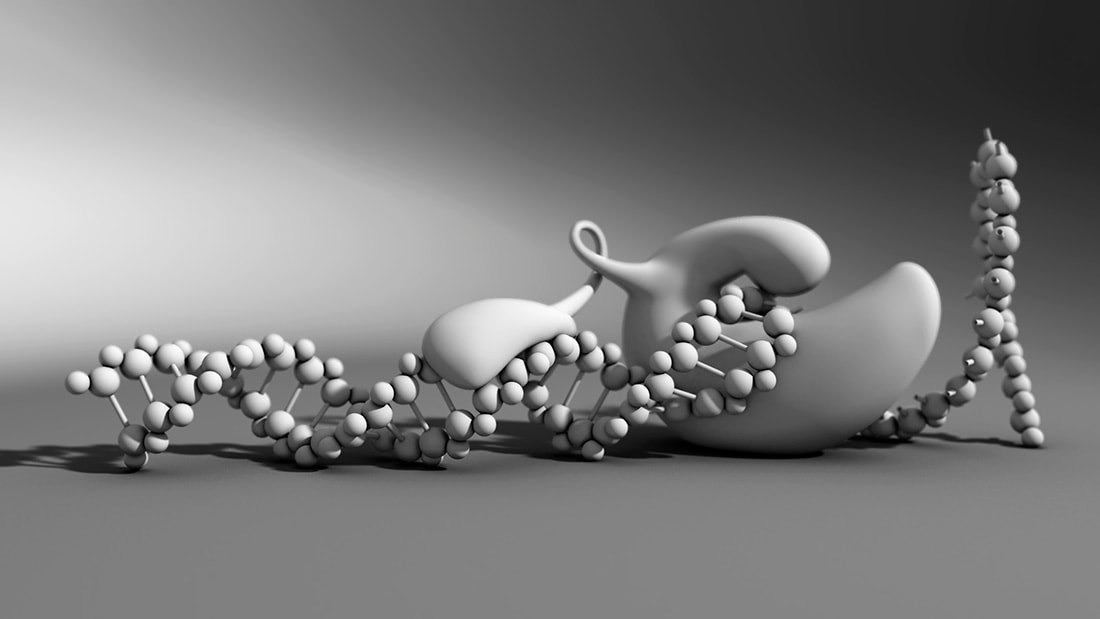

Some of my models, 3D printed. Surreal to hold something in the physical world that previously existed only in digital space. Very cool. These two are made from nylon.

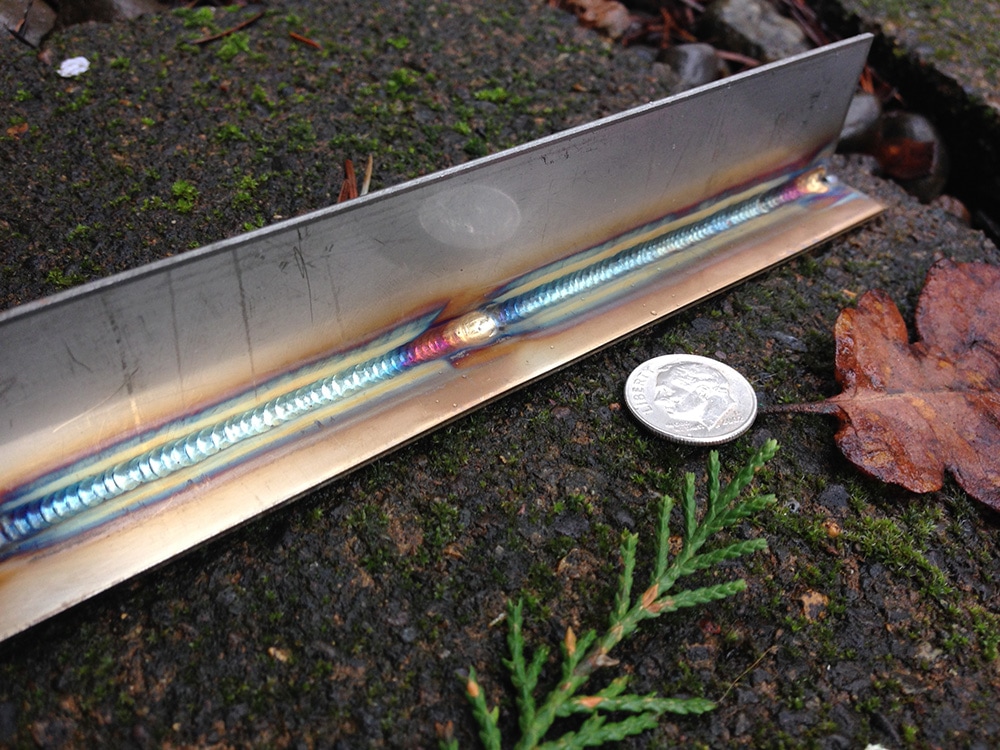

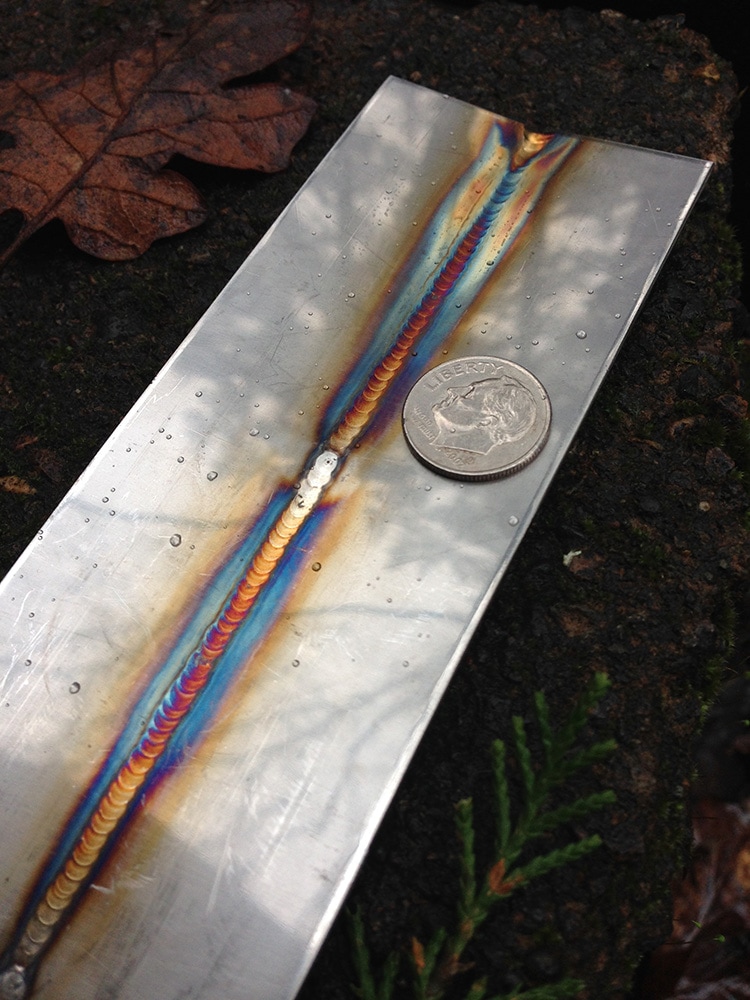

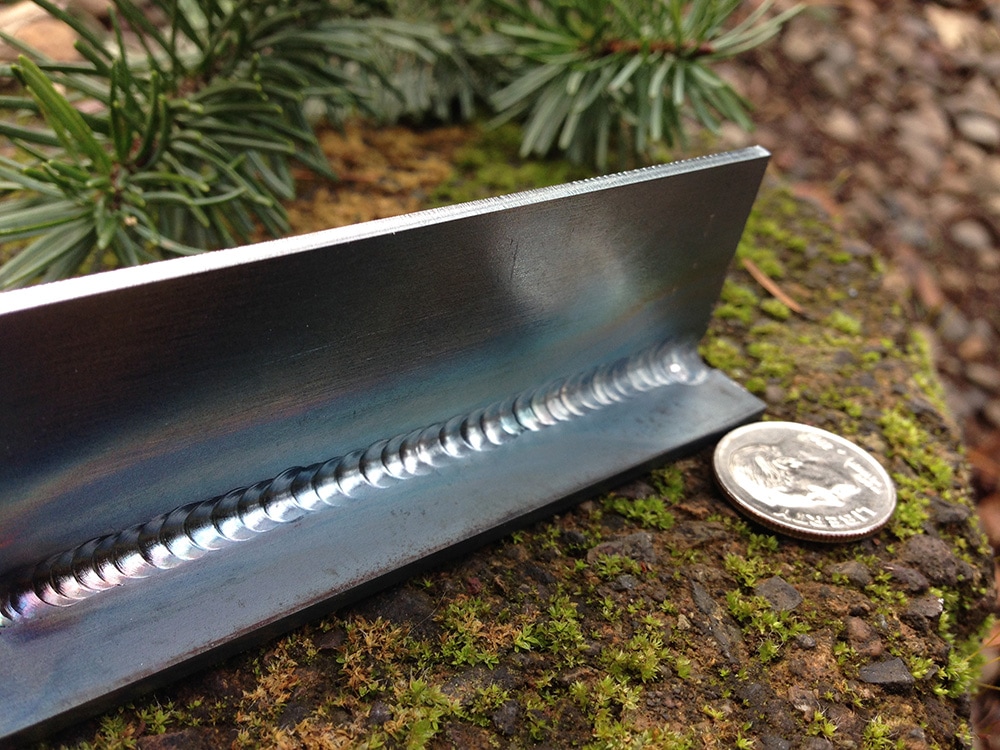

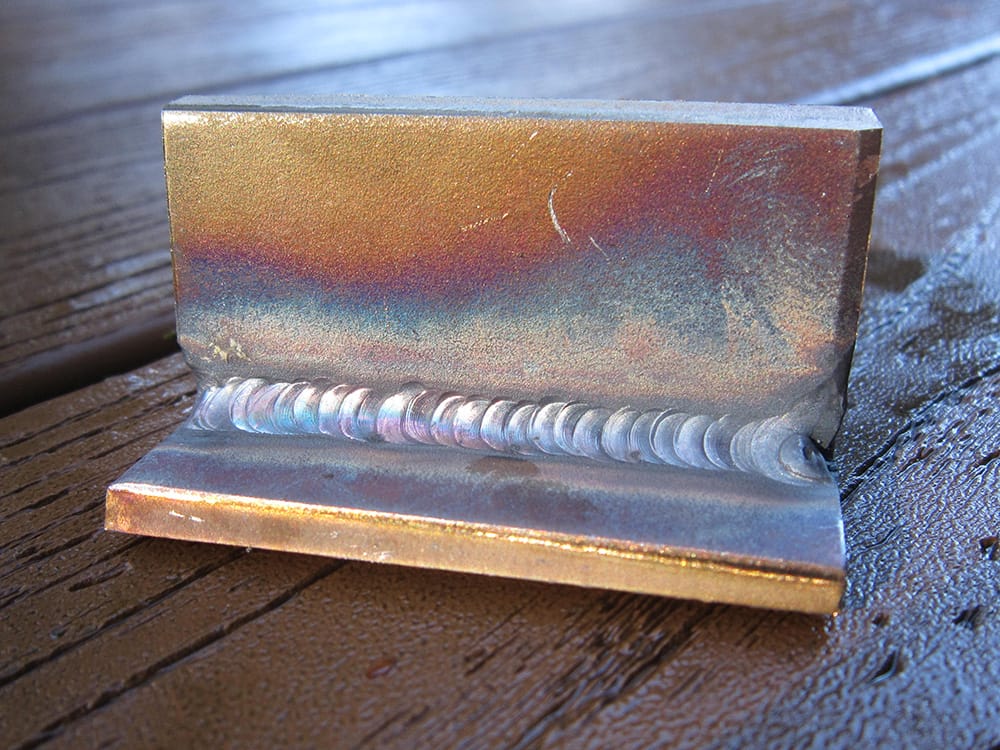

Stainless welds on various joint types. This material is 0.055 inches (just under 1.4 millimeters) thick. A true test of control.

I took the excellent 12-week Weld School course at WW NDT Services. The training is intensive and covers a lot of ground in a short period: MIG, TIG, Stick, and Flux-Core on carbon steel, stainless steel, and aluminum. I earned 3G, 4G, and 6G AWS D1.1 certifications in Stick and Dual-Shield.


Derek Jenson Blog

My website serves to archive experiments, document projects, share techniques, and motivate further exploration and artistry in 3D space.

June 2020
