
Fire up those chemokines! Created this sequence (concept to completion) in 5 days given only an audio clip & general script guidelines.

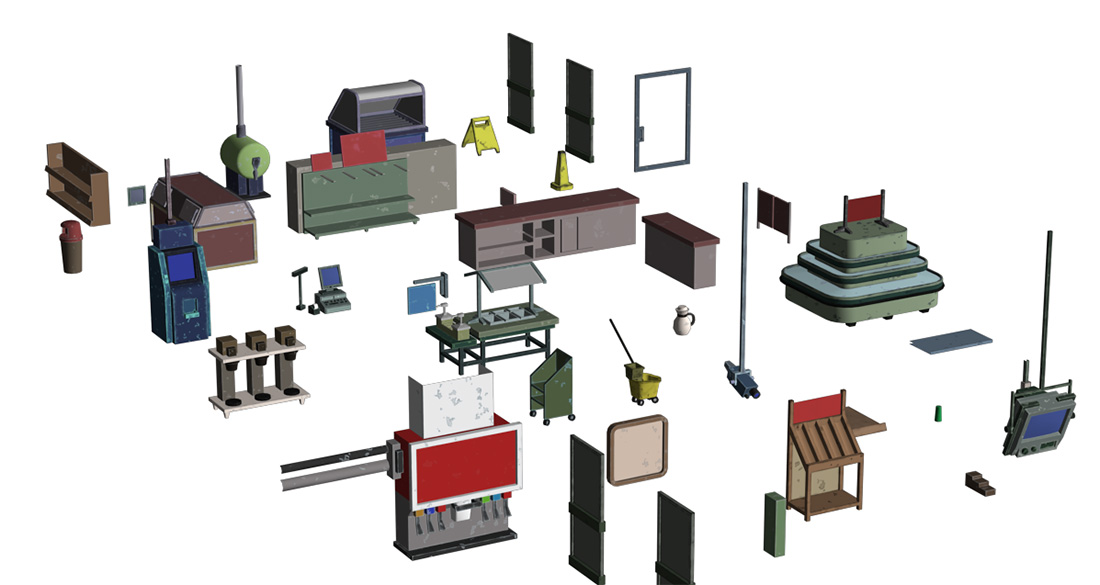

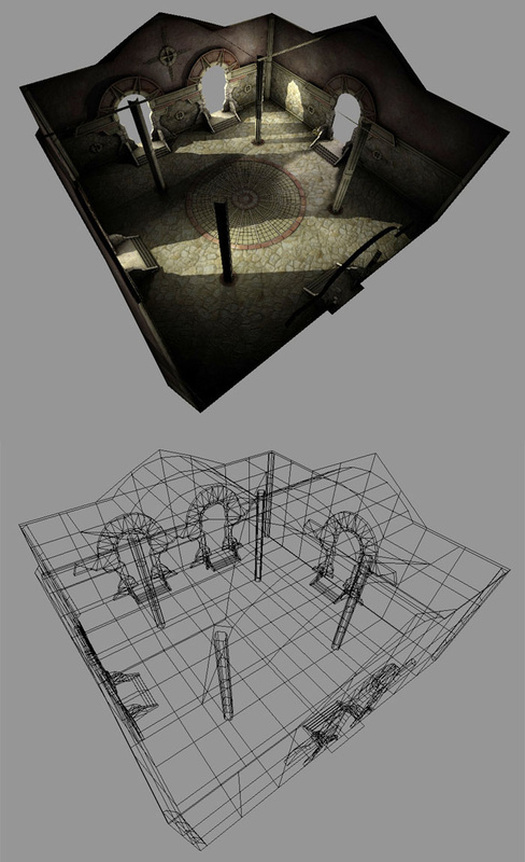

Sadly, the Corner Store was cut from Surviving Independence, so I'm posting a couple of screen captures to archive the level. There wasn't enough production time to add the unique gameplay and scenarios for this level. One video shows the completed base modeling and the other shows nearly final environment art.

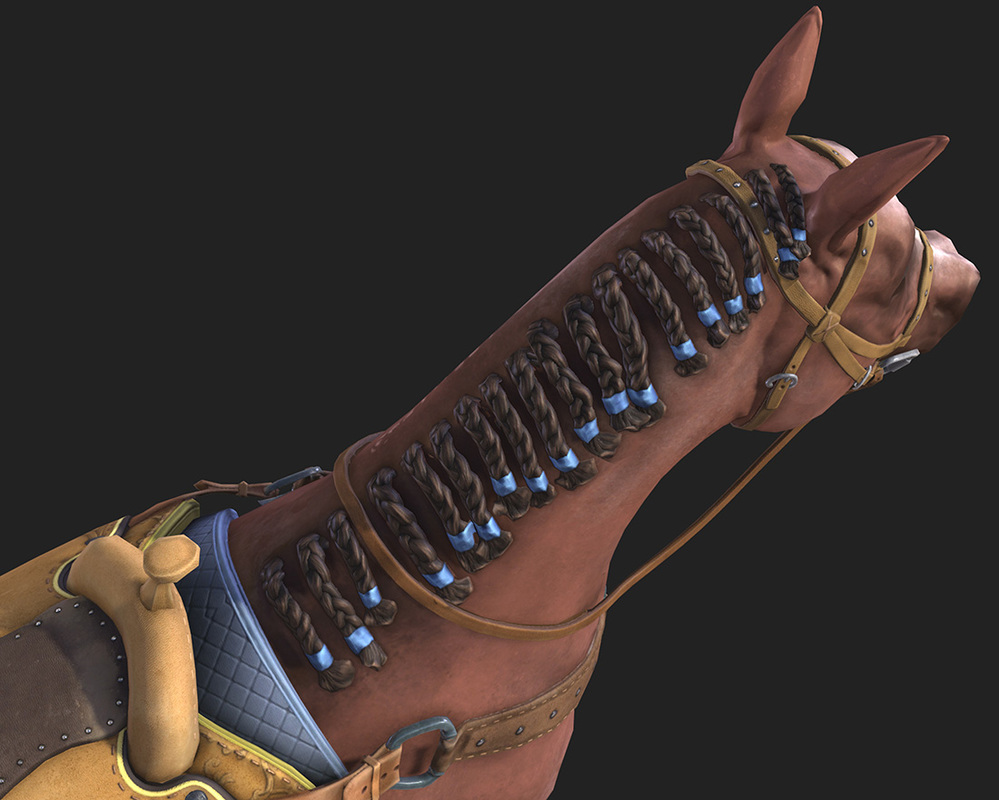

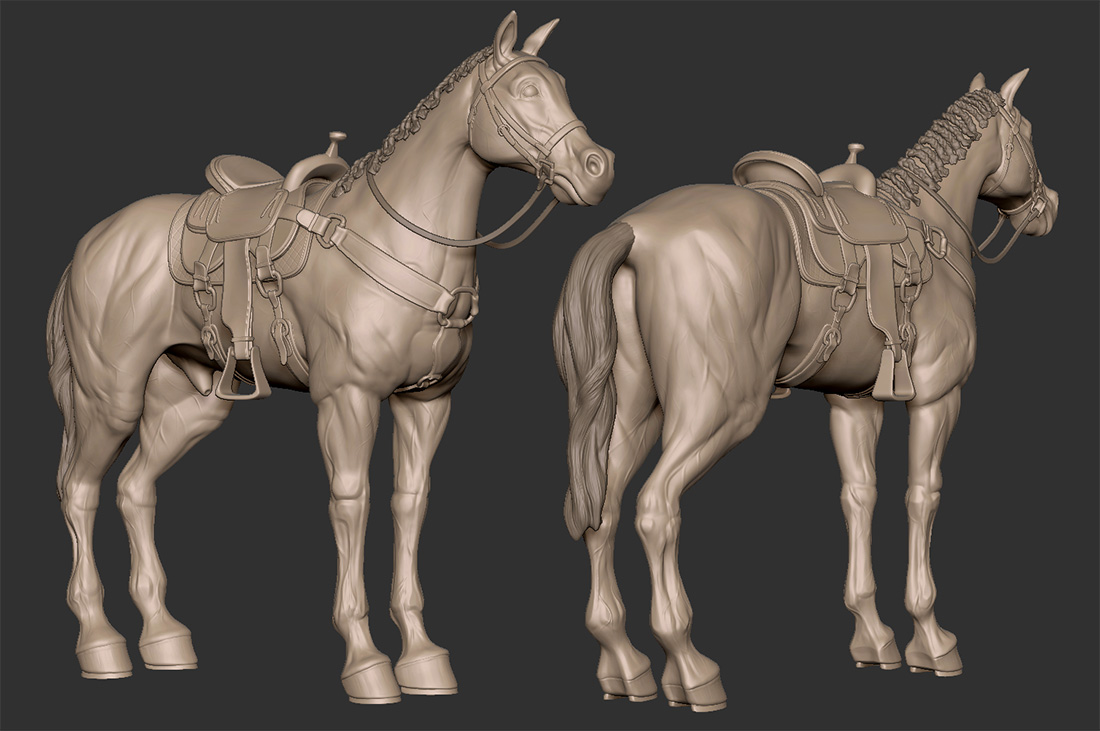

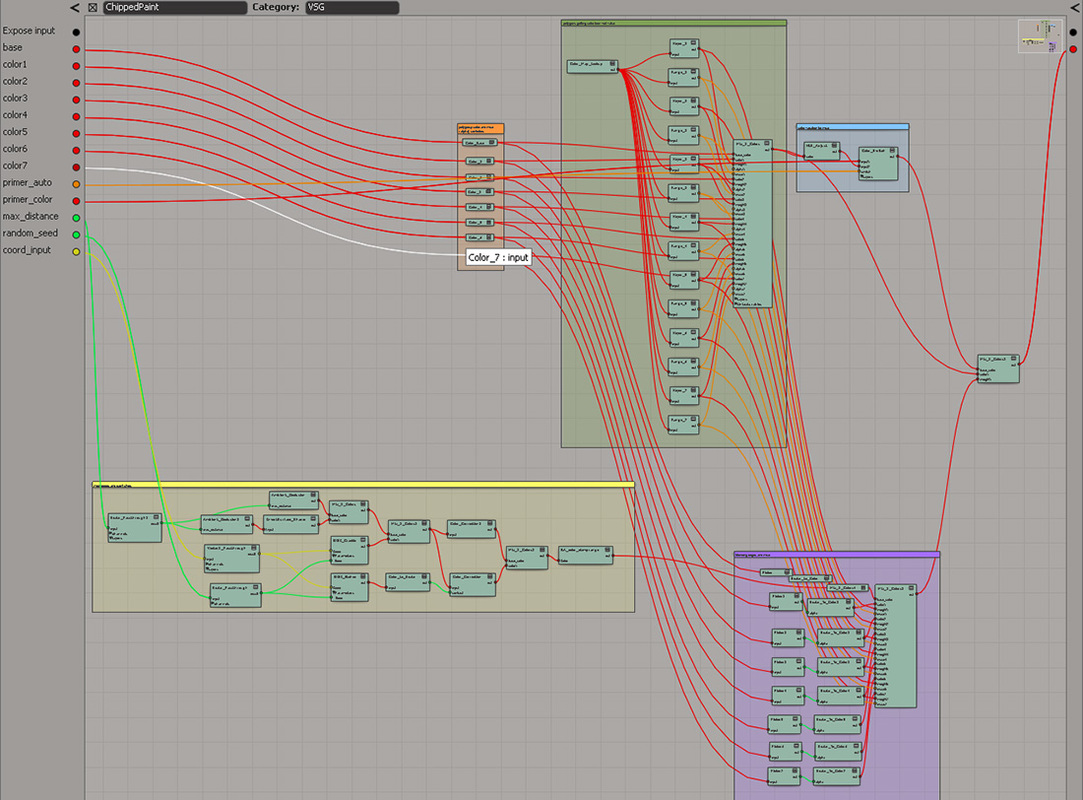

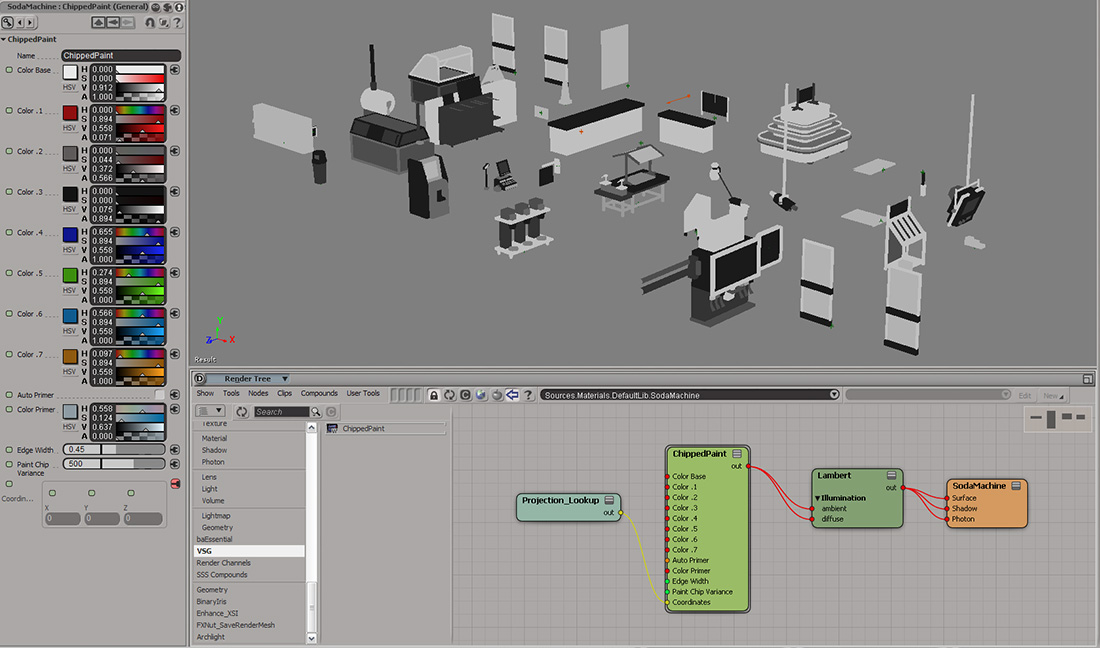

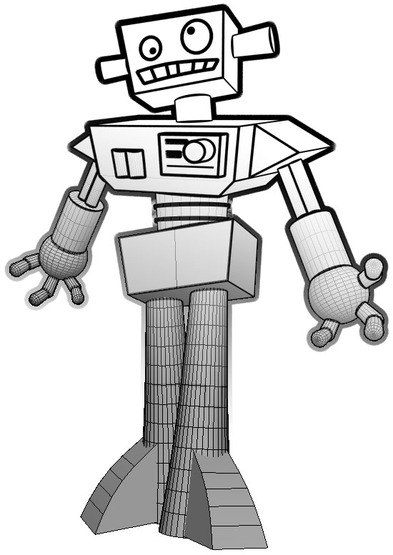

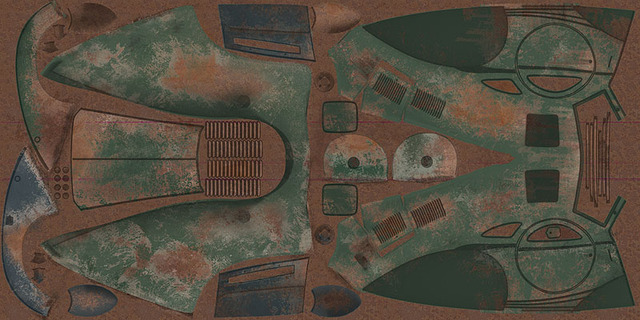

Independence has over 500 models & 1000 textures in it. As the only artist on the game, I had to develop fast production techniques. Knowing I wouldn't be able to hand-paint most of the textures, I created an uber shader network. The shader looked up vertex values that I flood-filled onto polygons while modeling. It then applied the appropriate base colors for the level theme and added some color variation, edge wear, & damage. I could then bake all the level textures with a single push of a button. This texturing method was similar to a Substance Designer workflow, but lightweight and without having to leave & return to the base 3D application. Below are screen captures showing this technique applied to props in the Corner Store. While this level was cut from the game, this method helped ship the other 37 levels!

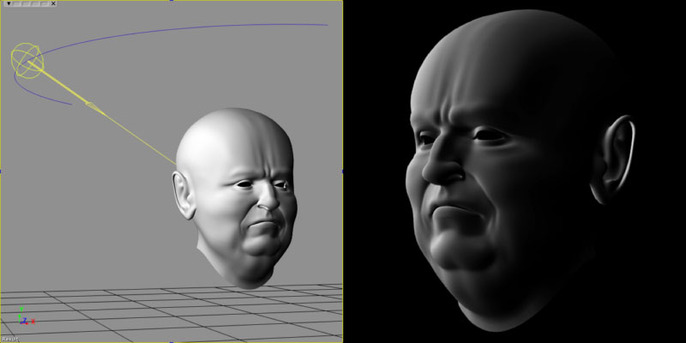

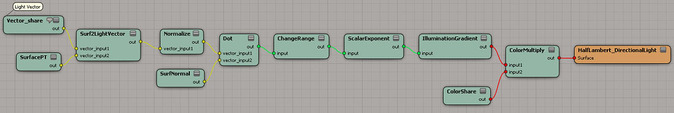

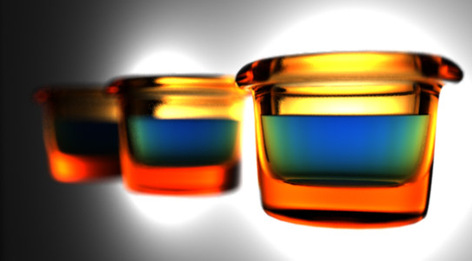
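To make the vertex-value lookup idea concrete, here is a minimal Python sketch. It is not the actual shader network: the palette contents, the `shade_vertex` name, and the way variation and edge wear are blended are all assumptions made for illustration.

```python
import random

# Hypothetical palettes: painted vertex value -> base RGB, one table per level theme.
THEME_PALETTES = {
    "corner_store": {0: (0.80, 0.78, 0.70), 1: (0.55, 0.10, 0.10)},
    "garage": {0: (0.35, 0.35, 0.38), 1: (0.90, 0.60, 0.10)},
}


def shade_vertex(theme, vertex_value, edge_factor, seed,
                 variation=0.05, wear_color=(0.6, 0.6, 0.6)):
    """Return an RGB color for one vertex (illustrative only).

    theme        -- which palette to use for the level
    vertex_value -- the ID flood-filled onto the polygon while modeling
    edge_factor  -- 0.0 on flat areas, 1.0 on a fully worn edge
    seed         -- per-object seed so the variation is repeatable at bake time
    """
    base = THEME_PALETTES[theme][vertex_value]
    # Small brightness jitter so repeated props do not look identical.
    jitter = random.Random(seed).uniform(-variation, variation)
    varied = tuple(min(1.0, max(0.0, c + jitter)) for c in base)
    # Blend toward the wear color along edges.
    return tuple(v * (1.0 - edge_factor) + w * edge_factor
                 for v, w in zip(varied, wear_color))
```

Swapping the `theme` argument re-colors the same painted IDs, which is the part that makes a one-button bake per level plausible.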

Wired up a Half-Lambertian shader. It's a nice technique for simulating slight subsurface light scattering, or very rough objects where light energy would be transmitted from its hit location to nearby matter. Nice soft fall-off, made famous in this Valve paper.

I've uploaded a preset for this material. Note that this material uses a vector share node (I've commented it), not a light, to calculate shading.

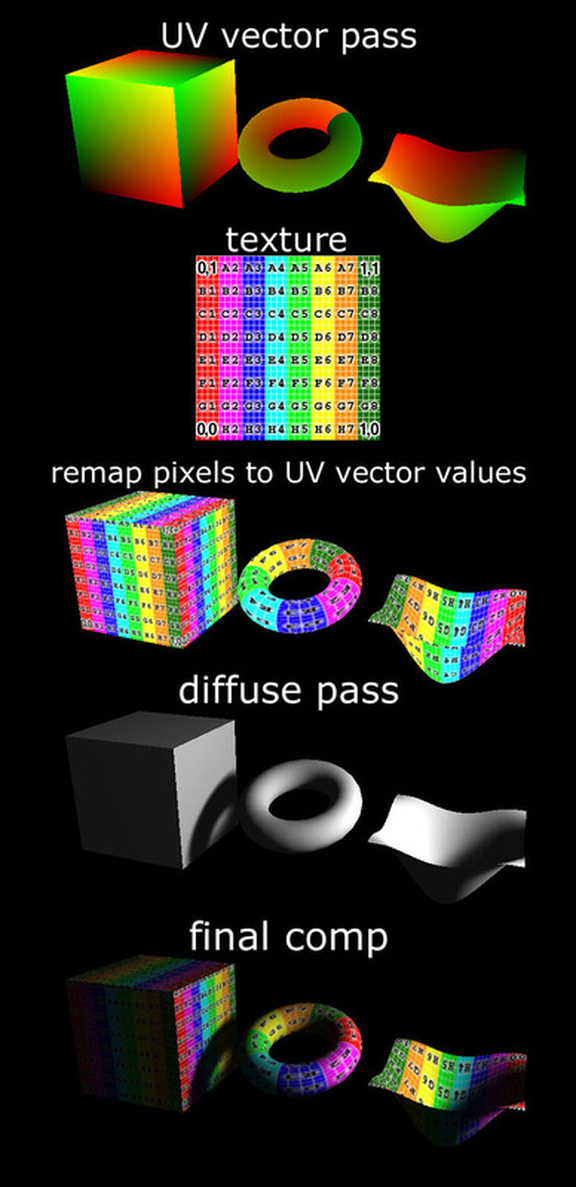
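For reference, the Half-Lambert term as commonly described scales the N·L dot product by 0.5, adds 0.5, and squares the result. The Python sketch below compares it with plain Lambert. The function names are mine, and the light direction is passed in as a plain vector rather than read from a light, which is my reading of the preset note above.

```python
import math


def normalize(v):
    """Return v scaled to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def lambert(normal, light_dir):
    """Standard diffuse term: clamps to black past 90 degrees."""
    return max(0.0, dot(normalize(normal), normalize(light_dir)))


def half_lambert(normal, light_dir):
    """Wrapped diffuse term: remap N.L from [-1, 1] to [0, 1], then square."""
    wrapped = 0.5 * dot(normalize(normal), normalize(light_dir)) + 0.5
    return wrapped * wrapped
```

At 90 degrees to the light, `lambert` has already gone black while `half_lambert` still returns 0.25, which is where the soft fall-off comes from.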

Many game engines are using deferred rendering these days as opposed to forward rendering. In addition, many movie shots have been authored with deferred techniques and then assembled and shaded in Nuke. The concept for both real-time engines and offline renderers is basically the same: encode 3D data into 2D space (buffers) and then solve for the lighting/shading as a post process. If you are interested in lighting/shading in post, check out the Postlight tool by Andy Nicholas.

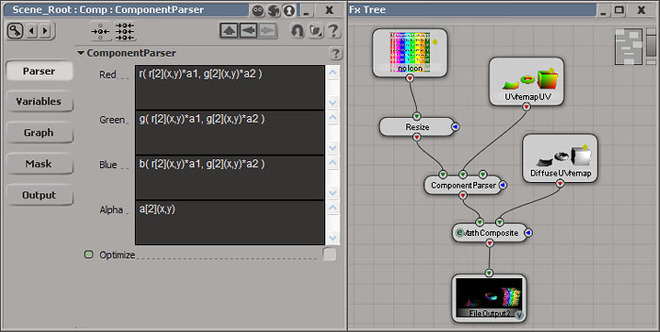
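The "encode into buffers, light in post" idea can be sketched in a few lines of Python. This is a toy, not how any particular engine or Nuke setup works: the 2x2 buffers are hand-filled stand-ins for a real geometry pass, and `make_gbuffer` and `lighting_pass` are hypothetical names. The light direction is assumed to be normalized.

```python
def make_gbuffer():
    """Stand-in for the geometry pass: store surface data per pixel, not final color."""
    facing_camera = (0.0, 0.0, 1.0)
    facing_right = (1.0, 0.0, 0.0)
    return {
        "albedo": [[(1.0, 0.0, 0.0), (0.0, 1.0, 0.0)],
                   [(0.0, 0.0, 1.0), (1.0, 1.0, 1.0)]],
        "normal": [[facing_camera, facing_right],
                   [facing_camera, facing_right]],
    }


def lighting_pass(gbuffer, light_dir, light_color=(1.0, 1.0, 1.0)):
    """Shade every pixel from the stored buffers; no geometry is touched here."""
    shaded = []
    for albedo_row, normal_row in zip(gbuffer["albedo"], gbuffer["normal"]):
        row = []
        for albedo, normal in zip(albedo_row, normal_row):
            n_dot_l = max(0.0, sum(n * l for n, l in zip(normal, light_dir)))
            row.append(tuple(a * c * n_dot_l for a, c in zip(albedo, light_color)))
        shaded.append(row)
    return shaded


gbuffer = make_gbuffer()
front_lit = lighting_pass(gbuffer, (0.0, 0.0, 1.0))
side_lit = lighting_pass(gbuffer, (1.0, 0.0, 0.0))  # relight without re-rendering
```

The second call reuses the same buffers with a different light, which is the whole appeal of relighting in post.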

This deferred texture mapping test is a similar idea and mimics this tool by RevisionFX. For offline rendering, this additional UV vector pass can save re-rendering an image/animation by allowing me to swap textures after rendering is complete.

The Component Parser is the compositing node used to map the texture to the UV vector data. The variable a1 is the horizontal pixel count of the texture image, and a2 is its vertical pixel count.

Objects can be easily textured in post. Swapping textures is real-time without the need to re-render.
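Here is a rough Python sketch of what the lookup amounts to. It is not the Component Parser's expression syntax: `remap_texture` is a hypothetical name, the sampling is nearest-neighbor, and I'm assuming v = 0 is the top row of the texture. Only `a1` and `a2` follow the naming above.

```python
def remap_texture(uv_pass, texture):
    """Replace each rendered pixel with the texel its stored UV points at.

    uv_pass -- 2D grid of (u, v) pairs in [0, 1], one per rendered pixel
    texture -- 2D grid of texels, indexed as texture[row][column]
    """
    a1 = len(texture[0])  # horizontal pixel count of the texture
    a2 = len(texture)     # vertical pixel count of the texture
    result = []
    for uv_row in uv_pass:
        out_row = []
        for u, v in uv_row:
            # Scale UVs to pixel coordinates and clamp to the texture bounds.
            x = min(a1 - 1, max(0, int(u * a1)))
            y = min(a2 - 1, max(0, int(v * a2)))
            out_row.append(texture[y][x])
        result.append(out_row)
    return result


uv_pass = [[(0.0, 0.0), (0.75, 0.0)],
           [(0.25, 0.9), (1.0, 1.0)]]
brick = [["A", "B"], ["C", "D"]]
wood = [["w", "x"], ["y", "z"]]
first = remap_texture(uv_pass, brick)
swapped = remap_texture(uv_pass, wood)  # same render, new texture
```

Because the UV pass never changes, swapping `brick` for `wood` only repeats the cheap lookup, not the render.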

Using the toon shaders for illustrative renders. I made some line art, burned some screens, & printed the kiddos some cool custom T-shirts!

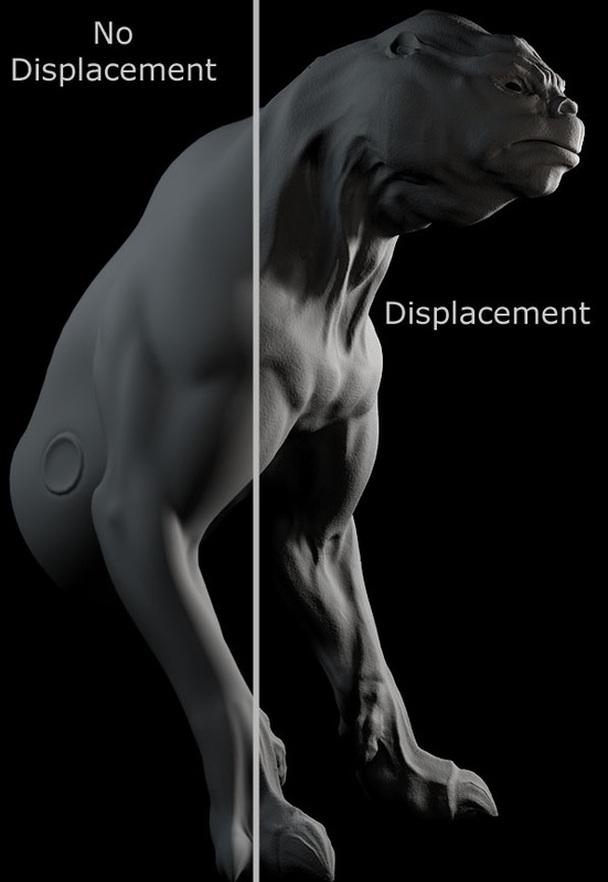

Displacement test with the 3Delight renderer.

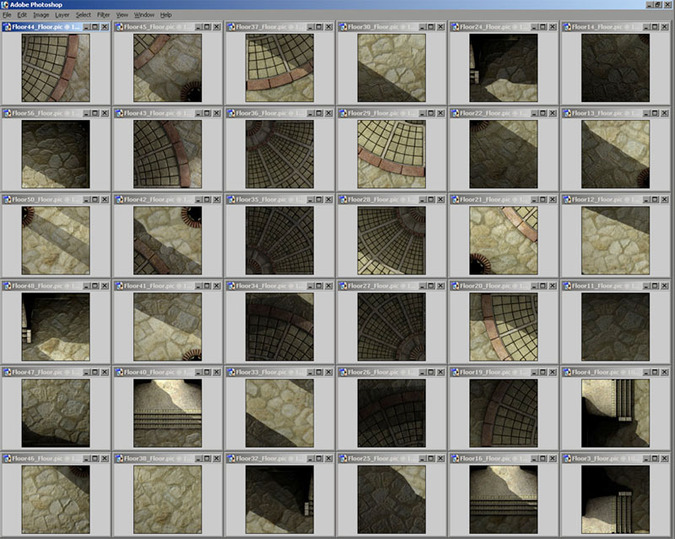

Streaming many 128 x 128 pre-baked textures on a consumer mobile device to push very high-end-looking graphics on limited hardware. Textures are loaded based on the viewer's location: a 'poor man's' mega-texturing system. Fun to use all the cheats and lessons learned from the past to manipulate the phone's capabilities.
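A location-based loader like this could be sketched roughly as follows in Python. This is an assumption-heavy illustration, not the code from the project: the grid layout, `world_units_per_tile`, the `TileCache` class, and the `load_tile` callback are all made up for the example.

```python
def tiles_near(viewer_x, viewer_y, world_units_per_tile, radius_tiles=1):
    """Return the grid coordinates of tiles within radius_tiles of the viewer."""
    center_x = int(viewer_x // world_units_per_tile)
    center_y = int(viewer_y // world_units_per_tile)
    return {
        (center_x + dx, center_y + dy)
        for dx in range(-radius_tiles, radius_tiles + 1)
        for dy in range(-radius_tiles, radius_tiles + 1)
    }


class TileCache:
    """Keeps only the small pre-baked textures near the viewer in memory."""

    def __init__(self, load_tile):
        self.load_tile = load_tile  # hypothetical callback: (x, y) -> texture
        self.resident = {}

    def update(self, viewer_x, viewer_y, world_units_per_tile=10.0, radius_tiles=1):
        wanted = tiles_near(viewer_x, viewer_y, world_units_per_tile, radius_tiles)
        # Evict tiles the viewer has moved away from.
        for key in list(self.resident):
            if key not in wanted:
                del self.resident[key]
        # Load tiles that just came into range.
        for key in wanted:
            if key not in self.resident:
                self.resident[key] = self.load_tile(key)
        return wanted


cache = TileCache(lambda key: f"tile_{key[0]}_{key[1]}.png")
cache.update(5.0, 5.0)   # viewer in tile (0, 0)
cache.update(15.0, 5.0)  # viewer walks into tile (1, 0)
```

With a radius of one tile, only nine 128 x 128 textures are resident at any time, however large the level is.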

Derek Jenson Blog


My website serves to archive experiments, document projects, share techniques, and motivate further exploration & artistry in 3D space.

Archives: June 2020
