
Using toon shaders for illustrative renders, I made some line art, burned some screens, & printed the kiddos some cool custom T-shirts!

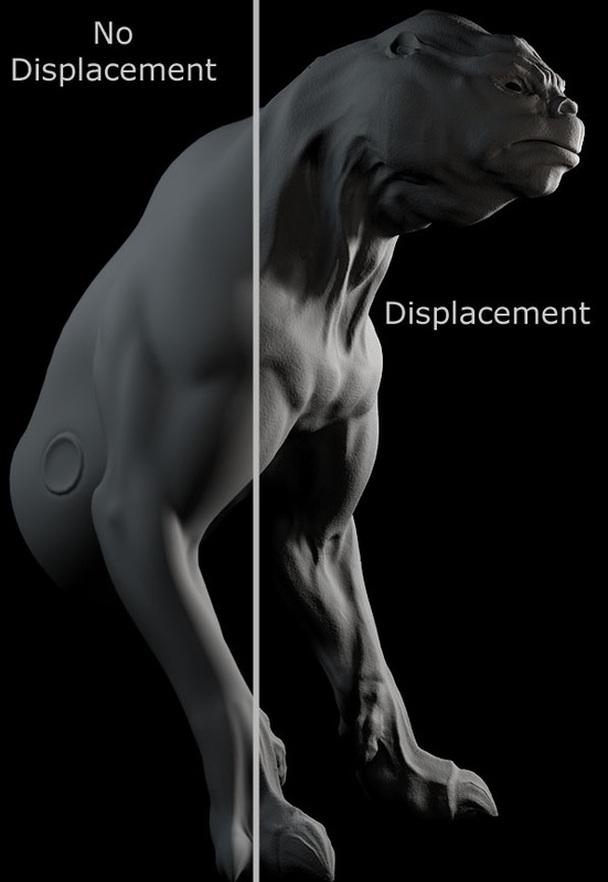

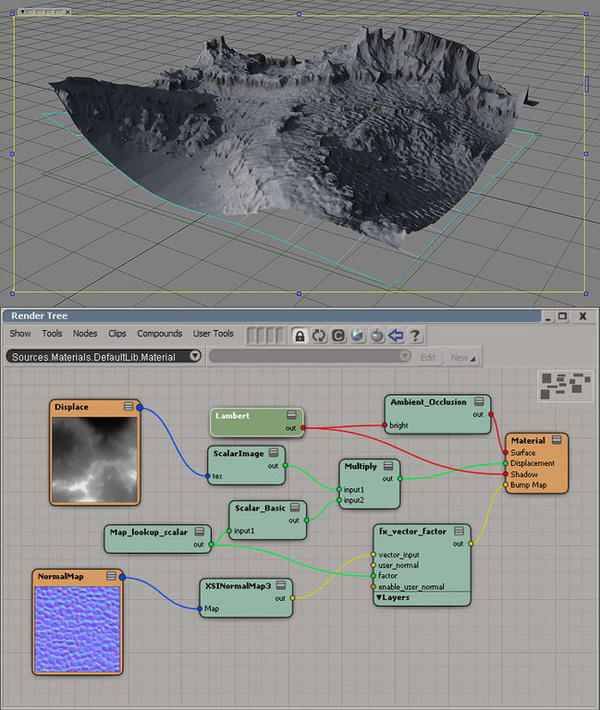

Displacement test with the 3delight renderer.

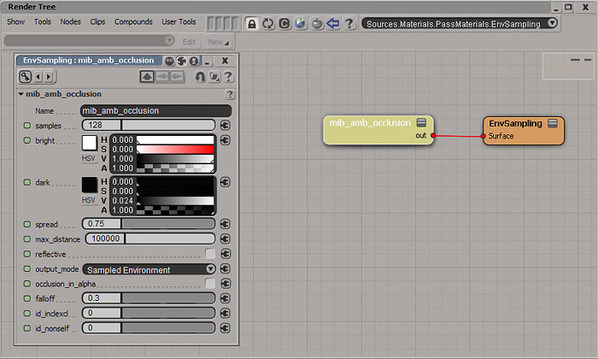

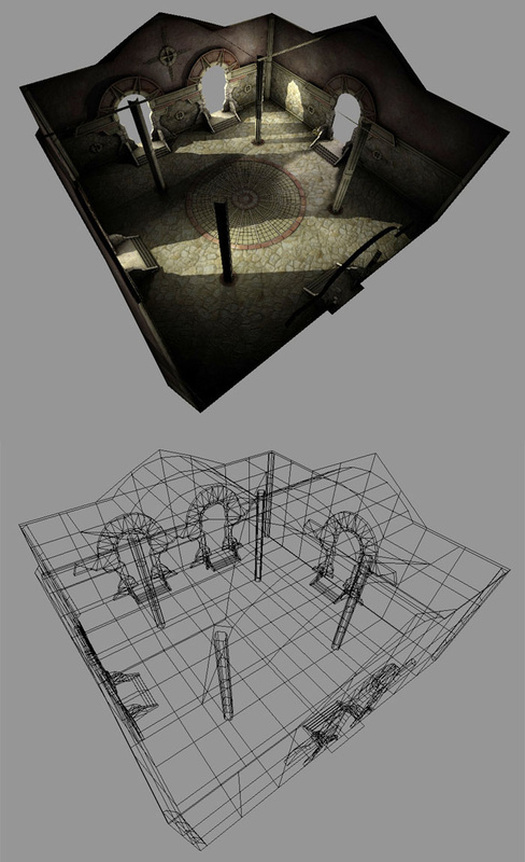

There is a lot of flexibility, production time savings, and look-development control to be gained by building lightmaps (for real-time engines) in passes. Using mental ray, these passes are fairly easy to set up and bake. However, while final gathering (FG) works great (result-wise) for radiosity sampling, it is only single-threaded when used for render mapping. Seeing as FG is a screen-space sampling method, I don't know if this can be called a bug, but it is definitely an oversight. The limitation gets worse when several computers are used via satellite rendering to generate a single lightmap (or lightmap pass): the other systems sit idle while one machine, using one core, calculates the FG prepass.

To avoid this production slowdown and get your systems rendering with full CPU power, an environment sampling shader can do the work FG would normally calculate. Using mental ray's ambient occlusion shader with the parameters below, I generated the environment sampling with satellite rendering using 100% of 24 cores. Use this sampling method with interactive IBL light domes for a fast, iterative lighting solution.
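Why environment sampling sidesteps the single-threaded prepass: each shading point estimates its own lighting by firing rays over the hemisphere and looking up the environment for the rays that escape, so there is no shared prepass to wait on and texels can be split across cores and satellite machines freely. The sketch below is a generic Monte Carlo version of that idea, not mental ray's ambient occlusion shader. `env` and `occluded` are hypothetical callbacks standing in for the environment lookup and the renderer's ray cast, and the shading normal is assumed to be +Z.

```python
import math
import random


def cosine_sample_hemisphere(u1, u2):
    """Map two uniform numbers in [0,1) to a cosine-weighted unit direction around +Z."""
    r = math.sqrt(u1)
    phi = 2.0 * math.pi * u2
    return (r * math.cos(phi), r * math.sin(phi), math.sqrt(max(0.0, 1.0 - u1)))


def environment_sample(env, occluded, samples=64, seed=0):
    """Estimate diffuse environment lighting at a point whose normal is +Z.

    env(d)      -> (r, g, b) radiance arriving from direction d (hypothetical callback)
    occluded(d) -> True if a ray toward d hits scene geometry (hypothetical callback)

    With cosine-weighted sampling the pdf (cos/pi) cancels the cosine term,
    so the returned average of visible radiance equals irradiance / pi.
    """
    rng = random.Random(seed)
    total = [0.0, 0.0, 0.0]
    for _ in range(samples):
        d = cosine_sample_hemisphere(rng.random(), rng.random())
        if occluded(d):
            continue  # blocked rays contribute nothing (the occlusion part)
        c = env(d)
        for i in range(3):
            total[i] += c[i]
    return tuple(t / samples for t in total)
```

Multiplying the returned average by the surface's diffuse albedo gives the baked diffuse color for that texel; raising `samples` trades bake time for less noise.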

Here is the collection of IES profiles I use the most; feel free to download the .zip file. I took the liberty of giving the IES files artist-friendly names. Using IES profiles to scatter indirect light energy into a scene gives the render a certain legitimacy, even if the profile isn't used for light scatter effects directly. You can accomplish indirect scatter by setting up photon-only lights with IES profiles.

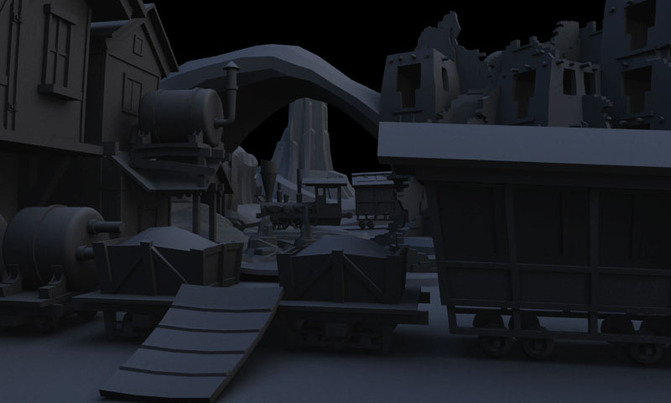

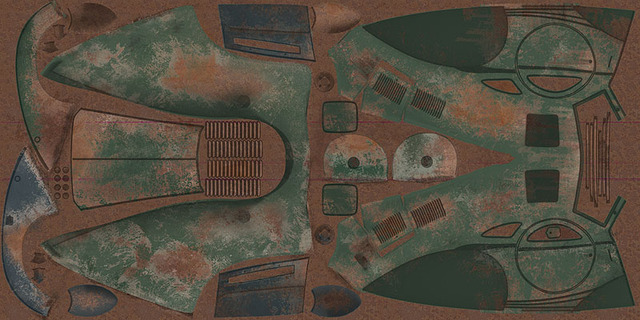

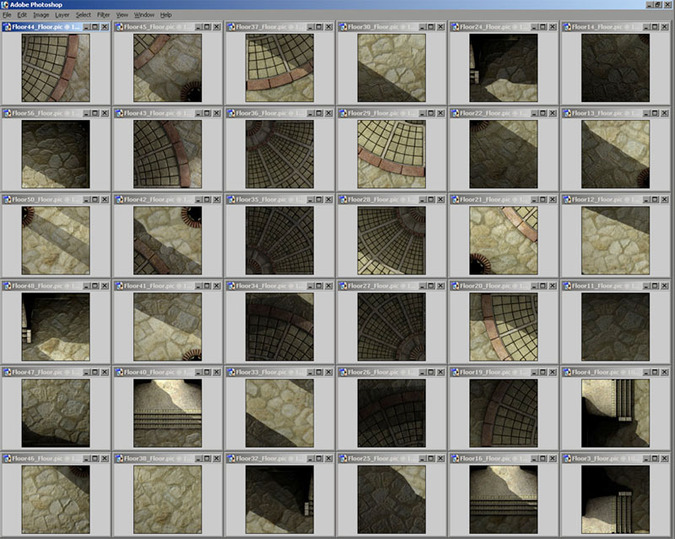
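For a sense of what's inside those files: an IES (LM-63) profile is a plain-text table of candela values indexed by vertical and horizontal angle, which is what a renderer uses to shape emission, including the photon emission of photon-only lights. Below is a simplified reader. It assumes `TILT=NONE`, interpolates only along the vertical angle within one horizontal plane (fine for rotationally symmetric profiles), and the function names are hypothetical; production renderers' IES support handles the full format.

```python
def parse_ies(text):
    """Read vertical angles, horizontal angles and the candela table from an
    IES LM-63 file. Simplified: only TILT=NONE files are accepted."""
    lines = text.splitlines()
    start = None
    for i, line in enumerate(lines):
        key = line.strip().upper()
        if key.startswith("TILT="):
            if key != "TILT=NONE":
                raise ValueError("this sketch only handles TILT=NONE")
            start = i + 1
            break
    if start is None:
        raise ValueError("no TILT= line found")
    # Everything after the TILT line is whitespace/comma separated numbers.
    nums = [float(t) for t in " ".join(lines[start:]).replace(",", " ").split()]
    multiplier = nums[2]
    n_vert, n_horiz = int(nums[3]), int(nums[4])
    pos = 13  # 10 values on the lamp line + 3 on the ballast/watts line
    vert = nums[pos:pos + n_vert]
    pos += n_vert
    horiz = nums[pos:pos + n_horiz]
    pos += n_horiz
    candela = []
    for _ in range(n_horiz):
        # One row of vertical-angle samples per horizontal angle.
        candela.append([c * multiplier for c in nums[pos:pos + n_vert]])
        pos += n_vert
    return vert, horiz, candela


def intensity(vert, row, angle):
    """Linearly interpolate candela at a vertical angle (degrees) within one plane."""
    if angle <= vert[0]:
        return row[0]
    if angle >= vert[-1]:
        return row[-1]
    for k in range(1, len(vert)):
        if angle <= vert[k]:
            t = (angle - vert[k - 1]) / (vert[k] - vert[k - 1])
            return row[k - 1] + t * (row[k] - row[k - 1])
```

A photon-emitting light could, for example, weight each emitted photon by `intensity(...)` for its angle from the fixture's downward axis, which is how the profile ends up shaping the indirect scatter.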

Streaming many 128 x 128 pre-baked textures on a consumer mobile device to push very high-end looking graphics on limited hardware. Textures are loaded based on the viewer's location: a 'poor man's' mega-texturing system. It was fun to use all the cheats and lessons learned from the past to stretch the phone's capabilities.

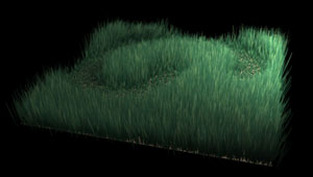
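The location-based loading can be sketched as a grid of tiles around the viewer plus a fixed-size least-recently-used cache, so memory stays bounded while tiles stream in and out. This is a hypothetical illustration, not the shipped code: `TILE_WORLD_SIZE`, `TileCache`, and the `loader` callback are assumptions, and a real mobile engine would load tiles asynchronously.

```python
from collections import OrderedDict

TILE_WORLD_SIZE = 10.0  # hypothetical: world units covered by one 128 x 128 tile


def tiles_near(x, z, radius):
    """Return the (col, row) indices of tiles within `radius` tiles of the viewer."""
    cx = int(x // TILE_WORLD_SIZE)  # floor division keeps negative coords correct
    cz = int(z // TILE_WORLD_SIZE)
    return {(cx + dx, cz + dz)
            for dx in range(-radius, radius + 1)
            for dz in range(-radius, radius + 1)}


class TileCache:
    """Fixed-budget LRU cache of loaded tiles."""

    def __init__(self, capacity, loader):
        self.capacity = capacity
        self.loader = loader  # hypothetical callback: (col, row) -> texture handle
        self.tiles = OrderedDict()

    def update(self, x, z, radius=1):
        if self.capacity < (2 * radius + 1) ** 2:
            raise ValueError("capacity must hold every tile in the view radius")
        wanted = tiles_near(x, z, radius)
        for key in sorted(wanted):
            if key in self.tiles:
                self.tiles.move_to_end(key)  # mark as recently used
            else:
                self.tiles[key] = self.loader(key)
        while len(self.tiles) > self.capacity:
            self.tiles.popitem(last=False)  # evict the least recently used tile
        return wanted
```

Keeping the budget at exactly the view footprint, as in the usage `TileCache(9, loader)` with `radius=1`, means anything behind the viewer is dropped as soon as it leaves the 3 x 3 neighborhood.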

Having a compositor built into a 3d program offers a new way to drive any parameter in the 3d app. Softimage (as well as Blender & Houdini) has an integrated compositor, and this opens up many possibilities. Here I used a Vector Paint node in Softimage's compositor to drive the value of a CutMap parameter on hair (grass). The effect works perfectly and can be tuned interactively in a 3d viewport.

If you are a Softimage user, download the supplied scene file below to view the setup:

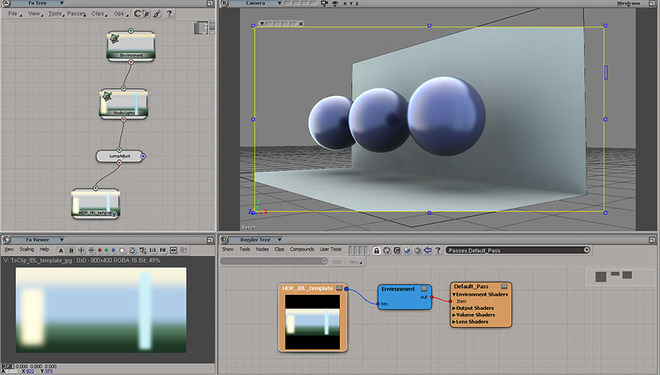
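Conceptually, a painted map drives a per-strand parameter by sampling the image at each strand's root UV. Here is a minimal sketch of that lookup, assuming a grayscale map stored as rows of 0..1 floats and the convention that brighter paint means more cut; `bilinear` and `cut_values` are hypothetical helpers, not Softimage's CutMap implementation.

```python
def bilinear(img, u, v):
    """Bilinearly sample a grayscale image (rows of 0..1 floats) at UV in [0,1].
    Row 0 is v = 0 here (assumption; flip v if your UVs start at the bottom)."""
    h, w = len(img), len(img[0])
    x = min(max(u, 0.0), 1.0) * (w - 1)
    y = min(max(v, 0.0), 1.0) * (h - 1)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0
    top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
    bot = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
    return top * (1 - fy) + bot * fy


def cut_values(painted, root_uvs, invert=False):
    """Per-strand cut amount read from a painted map at each strand's root UV."""
    out = []
    for u, v in root_uvs:
        c = bilinear(painted, u, v)
        out.append(1.0 - c if invert else c)
    return out
```

Because the lookup is just a texture read per strand, repainting the map and re-evaluating is cheap, which is what makes the viewport tuning feel interactive.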

Using an integrated compositor (Blender, Softimage, Houdini), it is possible to create an interactive studio environment to drive the IBL in a 3d scene. By wiring a couple of Vector Paint nodes together in Softimage, I can create an interactive environment map, then use that map as the source image for final gathering or environment sampling. HDR Light Studio is a standalone product that does just that. Read about the setup in Softimage here. This environment sampling setup was used in two full-scale production Tony Hawk video game projects.

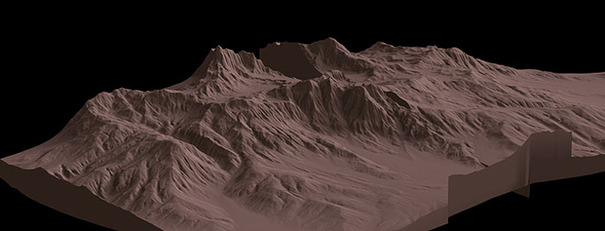

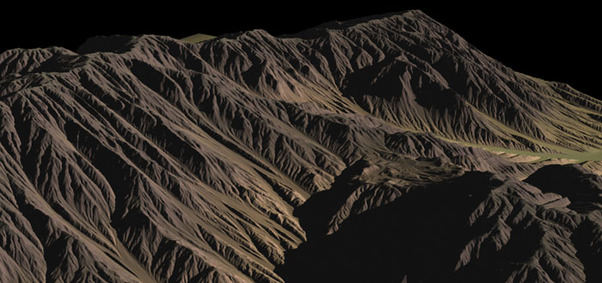
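The core of an interactive environment map like this is mapping directions onto an equirectangular (lat-long) image and painting bright shapes ("softboxes") at chosen directions; the finished map then feeds FG or environment sampling. The sketch below assumes a Y-up, single-channel map stored as rows of floats and a disc-shaped softbox. All names are hypothetical, and neither HDR Light Studio nor the Softimage setup necessarily works this way internally.

```python
import math


def latlong_to_direction(u, v):
    """Equirectangular (u, v) in [0,1] -> unit direction, Y up.
    u = 0.5 faces -Z and v = 0 is straight up (conventions assumed here)."""
    phi = (u - 0.5) * 2.0 * math.pi
    theta = v * math.pi
    return (math.sin(theta) * math.sin(phi),
            math.cos(theta),
            -math.sin(theta) * math.cos(phi))


def direction_to_latlong(d):
    """Inverse of latlong_to_direction for a unit direction d."""
    x, y, z = d
    u = 0.5 + math.atan2(x, -z) / (2.0 * math.pi)
    v = math.acos(max(-1.0, min(1.0, y))) / math.pi
    return u, v


def add_softbox(env, center_dir, radius_deg, intensity):
    """Add a disc-shaped light of angular radius radius_deg, centred on the unit
    vector center_dir, to a single-channel lat-long map (rows of floats)."""
    height, width = len(env), len(env[0])
    cos_r = math.cos(math.radians(radius_deg))
    for row in range(height):
        for col in range(width):
            d = latlong_to_direction((col + 0.5) / width, (row + 0.5) / height)
            # The pixel is inside the disc if its angle to the centre is <= radius.
            if sum(a * b for a, b in zip(d, center_dir)) >= cos_r:
                env[row][col] += intensity
    return env
```

Re-running `add_softbox` as a light is dragged and handing the map back to the renderer is what makes the loop interactive; a production version would use RGB HDR values and account for the varying solid angle of lat-long pixels.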

A few terrain displacement tests. I used 3delight and mental ray to achieve these results; the mental ray renders were enhanced with normal maps. Bump mapping wasn't necessary when rendering with 3delight, since its displacement results hold high detail with low render times. If you use CrazyBump or xNormal to generate normal maps, I find this shader very helpful for the tuning process: it removes the need to 'tweak' parameters in a separate app. The author's (Felix Reichert's) site is here.
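Normal maps can be derived from a height field; the basic step is a finite-difference slope per pixel. This minimal sketch uses central differences with clamped edges on a grayscale height map. `strength` is a hypothetical slope multiplier, and the green-channel sign convention (which differs between engines) is an assumption you may need to flip. CrazyBump and xNormal do considerably more than this.

```python
import math


def height_to_normals(height, strength=1.0):
    """Convert a grayscale height map (rows of floats) to tangent-space unit normals.

    Central differences with clamped edges; returns rows of (nx, ny, nz).
    The sign of ny follows image rows growing downward (assumed convention).
    """
    h, w = len(height), len(height[0])

    def at(x, y):
        # Clamp lookups so edge pixels reuse their nearest neighbour.
        return height[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]

    normals = []
    for y in range(h):
        row = []
        for x in range(w):
            dx = (at(x + 1, y) - at(x - 1, y)) * 0.5 * strength
            dy = (at(x, y + 1) - at(x, y - 1)) * 0.5 * strength
            nx, ny, nz = -dx, -dy, 1.0
            inv = 1.0 / math.sqrt(nx * nx + ny * ny + nz * nz)
            row.append((nx * inv, ny * inv, nz * inv))
        normals.append(row)
    return normals


def encode_rgb(n):
    """Pack a unit normal into 0..255 RGB, the usual normal-map encoding."""
    return tuple(int(round((c * 0.5 + 0.5) * 255)) for c in n)
```

A flat height map encodes to the familiar (128, 128, 255) lavender; raising `strength` exaggerates the slopes, which is the kind of parameter an in-renderer tuning shader lets you adjust without round-tripping to another app.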

Derek Jenson Blog

My website serves to archive experiments, document projects, share techniques, and motivate further exploration & artistry in 3d space.

Archives

June 2020
